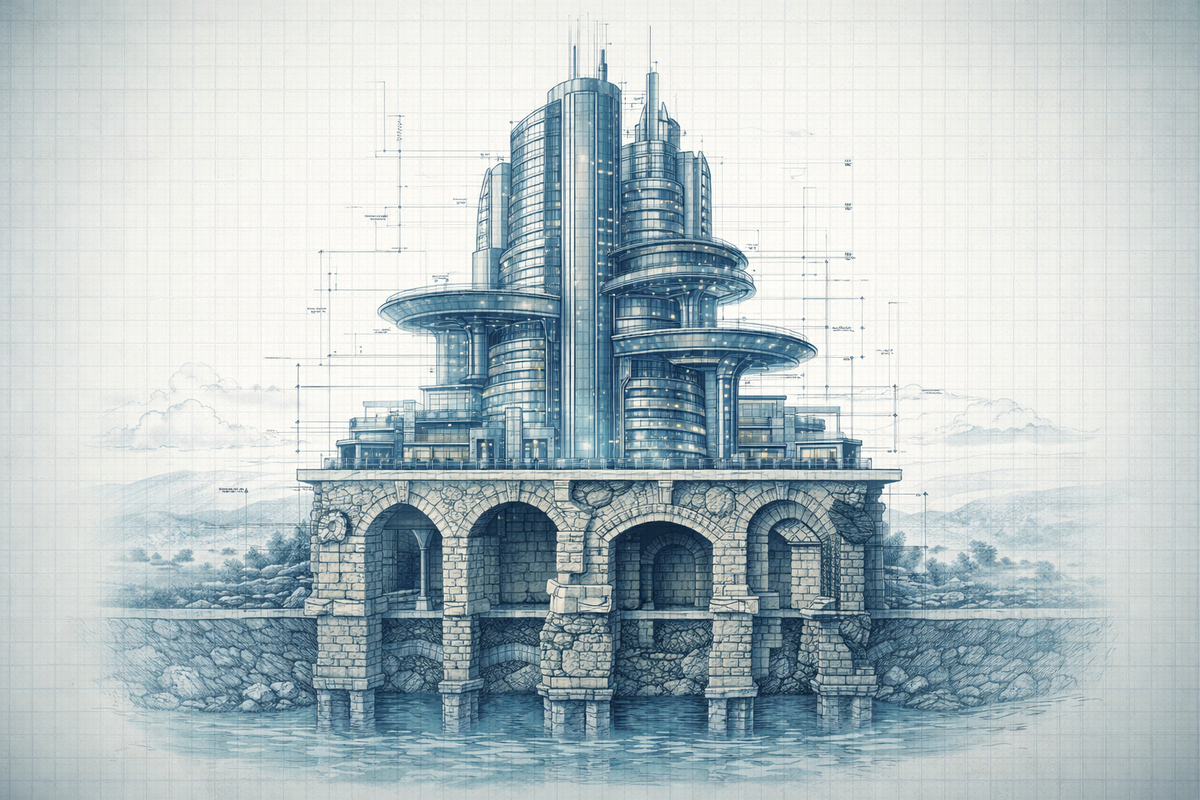

Old Rules Still Apply: Traditional Engineering Practices Matter More in the Age of AI Coding

For most of my career as a software engineer, I’ve tried to follow the practices that make engineering sustainable: writing tests, keeping changes small, reviewing carefully, documenting intent.

Recently, while reflecting on how my way of writing code has changed with the introduction of AI coding tools, I realised something interesting: many of the practices that guided good engineering for years have become even more important.

AI hasn’t replaced them. It has amplified their importance.

One shift I’ve noticed concerns documentation. For a long time, there was a strong idea in engineering that good code should explain itself. A well-written function, with clear naming and structure, shouldn’t need additional explanation. Comments were sometimes treated as a code smell, something that appeared when the code itself wasn’t clear enough.

There is still a lot of truth in that principle. Clear code remains essential. But documentation is starting to play a new role.

It is no longer written only for humans. It is increasingly written for machines.

Well-structured comments, clear docstrings, and explicit descriptions of behaviour help AI tools understand the intent of the code they are modifying or generating. What used to be optional context for human readers has become a powerful signal for AI assistants trying to reason about a codebase.
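As a rough illustration of what an explicit description of behaviour can look like, here is a minimal sketch. The function `apply_discount` and its checkout context are hypothetical, invented only for this example; the point is that the docstring states intent, limits, and failure behaviour that the code alone would leave implicit.

```python
from decimal import Decimal, ROUND_HALF_UP


def apply_discount(price: Decimal, percent: Decimal) -> Decimal:
    """Return `price` reduced by `percent`, rounded to two decimal places.

    Intent (hypothetical context): used at checkout, so the result must
    never be negative and must never exceed the original price.

    Raises:
        ValueError: if `price` is negative or `percent` is outside 0-100.
    """
    if price < 0:
        raise ValueError("price must be non-negative")
    if not (0 <= percent <= 100):
        raise ValueError("percent must be between 0 and 100")
    discounted = price * (Decimal(100) - percent) / Decimal(100)
    # Round half up to whole cents, as the docstring promises.
    return discounted.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
```

A human reader might infer most of this from the body. An assistant asked to change the function later has the constraints spelled out rather than implied.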

This made me realise something: the old engineering rules aren’t becoming obsolete in the age of AI-assisted development.

If anything, they are becoming load-bearing.

Let's take a practical example: a developer uses Cursor to generate a data processing function. The code is clean, well structured, properly typed, and even has sensible variable names. It passes a quick review and gets merged.

Two weeks later: a production incident.

The AI-generated code handled the happy path beautifully but silently corrupted data on edge cases no one thought to check. No tests caught it because none were written. No reviewer caught it because the code looked right.
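To make that failure mode concrete, here is a hypothetical sketch, not the code from any real incident. Both function names are invented for illustration. The first version reads cleanly and works on well-formed input, but it converts anything it cannot parse into `0.0`; the second refuses to guess.

```python
from __future__ import annotations


def parse_amounts_lenient(rows: list[str]) -> list[float]:
    """Happy-path version: looks tidy, but swallows bad input."""
    amounts = []
    for row in rows:
        try:
            amounts.append(float(row))
        except ValueError:
            # Unparseable input silently becomes a real-looking value.
            amounts.append(0.0)
    return amounts


def parse_amounts_strict(rows: list[str]) -> list[float]:
    """Fails loudly and reports which row could not be parsed."""
    amounts = []
    for index, row in enumerate(rows):
        try:
            amounts.append(float(row))
        except ValueError as exc:
            raise ValueError(f"row {index}: cannot parse amount {row!r}") from exc
    return amounts
```

Given `["10.5", "1,200.00"]`, the lenient version returns `[10.5, 0.0]`: a twelve-hundred amount has quietly become zero, and nothing in a quick read of the code flags it. The strict version raises a `ValueError` that names row 1.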

This story is becoming common. And it reveals something important about working with AI coding assistants: they’re force multipliers. They amplify whatever practices you already have.

Good discipline plus AI equals exceptional velocity.

No discipline plus AI equals a faster path to technical debt.

The old rules aren’t obsolete. They’re load-bearing.

Several practices that have kept codebases healthy for decades, such as Test-Driven Development, incremental delivery, and documentation-first approaches, aren’t relics of a slower era. They’re the guardrails that make AI-assisted development actually work.

Test-Driven Development: Define Correctness First

Tools like GitHub Copilot and Claude Code generate plausible code that often handles the obvious cases while quietly missing edge cases. The code compiles. It runs. It even looks elegant. But it wasn’t written with your specific constraints in mind.

TDD changes the order of the conversation.

You define correctness before asking AI to generate anything. The tests become a contract the AI must fulfil, not an afterthought you hope will catch bugs.

When you write tests first, you can let AI iterate on implementations until they pass, using tools like Claude Code that can run tests and refine their output automatically. But this only works if the tests exist.

Without TDD, you’re trusting AI’s judgement about what “working” means. With TDD, you’re telling it.
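A minimal sketch of what telling it can look like, using Python's standard `unittest` module. The function `normalise_email` and its rules are assumptions made up for this example. In a test-first flow the test class is written before any implementation exists; the candidate implementation is included here only so the block runs on its own.

```python
import unittest


def normalise_email(raw: str) -> str:
    """Candidate implementation, written (or generated) after the tests."""
    cleaned = raw.strip().lower()
    if cleaned.count("@") != 1 or cleaned.startswith("@") or cleaned.endswith("@"):
        raise ValueError(f"not a valid email address: {raw!r}")
    return cleaned


class NormaliseEmailContract(unittest.TestCase):
    """Written first: these tests state what 'working' means."""

    def test_lowercases_and_trims(self):
        self.assertEqual(normalise_email("  Ada@Example.COM "), "ada@example.com")

    def test_rejects_empty_input(self):
        with self.assertRaises(ValueError):
            normalise_email("   ")

    def test_rejects_missing_at_sign(self):
        with self.assertRaises(ValueError):
            normalise_email("ada.example.com")
```

Saved as a module, the tests can be run with `python -m unittest`. The edge cases (blank input, a missing `@`) are decided by the engineer up front instead of being left to whatever the generated code happens to do.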

Incremental Delivery: Keep Changes Reviewable

AI makes it tempting to generate entire features at once. Why write a function when you can prompt for the whole module? Why build incrementally when the AI can scaffold everything in one shot?

Today, many engineers also rely on AI to review code. These tools can catch obvious issues quickly and help analyse large changes faster than a human reviewer could. But even with AI assistance, large AI-generated change sets remain difficult to evaluate with confidence.

Your eyes glaze over by the third file. The code looks reasonable. The AI review passes. You approve it. Bugs ship.

Small increments keep each contribution, whether written by AI or reviewed with AI, understandable and reversible.

Generate a function, verify it works, commit. Generate the next piece, verify, commit. The feedback loop stays tight. AI tools can analyse changes more reliably, and human reviewers can still apply judgement where it matters. You catch drift early, before it compounds into a production incident buried under 2,000 lines of generated code you never really understood.

The velocity gain from AI isn’t about generating more code faster. It’s about maintaining quality while moving faster. Incremental delivery is how you do that.

Documentation-Driven Development: Clear Intent, Better Output

Writing documentation before code forces you to articulate what you’re building.

What problem does this solve? What are the inputs and outputs? What should happen when things go wrong?

This is exactly what AI needs: clear intent.

Vague prompts produce vague code. “Build a user service” gets you something generic. A well-written spec, with defined behaviour, edge cases, and constraints, becomes the prompt.

The documentation is the thinking; the code is merely the output.

Engineers who document first give AI better instructions and catch design flaws before any code is generated. They spend less time wrestling with AI output that missed the point, because they made the point clear from the start.
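One way to write such a spec is as a documented stub that exists before any implementation. The sketch below is hypothetical throughout: `User`, `UserNotFound`, and `get_user` are names invented for illustration, and the listed rules are example constraints, not a recommended design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: int
    email: str


class UserNotFound(LookupError):
    """Raised when no user exists for the requested id."""


def get_user(user_id: int) -> User:
    """Look up a single user by id.

    Problem: callers need one place to fetch a user without knowing the storage.
    Input: `user_id`, a positive integer.
    Output: the matching `User`.
    Failure modes:
      - `ValueError` if `user_id` is not positive.
      - `UserNotFound` if no such user exists (never return None).
    Constraint: must not modify any stored data.
    """
    raise NotImplementedError("spec only: implementation comes after the documentation")
```

Compared with “Build a user service”, the stub already answers the three questions above, and it can be handed to an assistant as the prompt more or less verbatim.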

Traditional Engineering Practices Matter More in the Age of AI Coding

Several of these practices share something fundamental: they’re about defining intent before generating code.

- Tests define correctness.

- Small increments define scope.

- Documentation defines purpose.

AI is extraordinarily powerful at generation. But it has no intent of its own. It doesn’t know what you’re trying to build, what trade-offs matter in your context, or what “done” looks like for your team.

These and other "traditional" engineering practices supply what AI lacks.

The engineers who thrive in this new era won’t be those who abandon discipline for speed. They’ll be the ones who recognise that AI makes discipline more valuable, not less.

The old rules still apply; they just have a new reason to exist.