Software is a Commodity. Data is the Moat.

There's a question every technology company should be asking itself right now: if AI can write code, what exactly are you selling?

For years, the software industry operated on a simple premise: the hard part was building the product. Writing the logic, wiring up the integrations, shipping something that didn't fall over. That took time, talent, and capital, and those barriers made imitation expensive. But that is changing fast. The cost of producing software is collapsing. With AI coding tools, features that once took a sprint can be prototyped in an afternoon. What previously required a team of engineers can now be scaffolded by one. The code itself is becoming cheap.

This is not a threat to good software companies. It's a filter. Because the companies that were always just selling code will now be exposed. And the ones built on something deeper — proprietary data, network effects, real operational embeddedness — will pull further ahead.

At Opply, we've been thinking hard about this shift. And the more we look at it, the more we believe we're in an unusually strong position.

Why data compounds in ways that code doesn't

Software can be replicated. Data cannot — at least not the kind that matters.

There are really two types of data in the world. The first is data you can buy or scrape: public commodity prices, customs records, market reports. Useful, but available to everyone. The second is proprietary operational data — the kind generated by running real transactions, real supplier relationships, real businesses. That data is sticky. It accumulates. And crucially, it gets better the more of it you have.

When you understand how a particular ingredient moves through a supply chain — which suppliers consistently deliver on time, which buyers tend to delay payment in Q4, what price signals typically precede a shortage — you can make predictions. You can surface risks before they land. You can recommend the right supplier before anyone asks. The intelligence compounds.

This is the nature of network data. Every order processed, every supplier communication logged, every invoice matched — all of it becomes signal. The platform gets smarter not because the engineers rewrote it, but because the data got richer.

The CPG supply chain is a data desert

Here's what makes this particularly interesting in the consumer goods space: CPG brands are drowning in operational complexity, but starved of useful data about it.

The average emerging food or drink brand is managing dozens of suppliers across ingredients, packaging, and co-manufacturing. Pricing is negotiated ad hoc. Supplier performance is tracked in spreadsheets, if at all. Decisions about what to order, when, and at what price are made largely on instinct and institutional memory. There's no clean record of what was ordered, what was promised, and what actually arrived.

This isn't a technology failure; it's a data infrastructure failure. The operations exist, but they aren't being captured as structured data, so the data flywheel hasn't started spinning.

Where Opply sits

Opply was built to be the operational layer for CPG brands — the place where sourcing, ordering, supplier management, payments, and inventory all converge. That positioning was always about removing friction. But increasingly, it's also about what that central position generates.

When you're handling every order from creation to delivery, managing all supplier communications, linking every invoice to its originating order, and doing this across hundreds of brands and thousands of supplier relationships, you accumulate a very particular kind of intelligence. You start to see patterns that no individual brand could ever see alone.

Which ingredient categories are seeing price pressure right now, across the whole network? Which suppliers are consistently late, and by how much? What does a healthy payment pattern look like for a brand at a given revenue stage versus one that's heading towards difficulty? What does a full ingredient spec look like for a product in a given category?

This is the proprietary layer. It's not something a brand builds on its own. It's something that emerges from operating at network scale, and it becomes more valuable with every customer who joins.

Every feature makes the next one smarter

The reason this data flywheel matters isn't abstract. It has a direct bearing on the product we're building.

The features we're most excited about — live inventory intelligence, margin visibility without waiting for month-end, supplier matching based on a product spec, a supply chain that anticipates reorders before they're needed — none of these are possible without clean, structured, longitudinal data. They're not just software features. They're emergent capabilities that only exist because of the data underneath them.

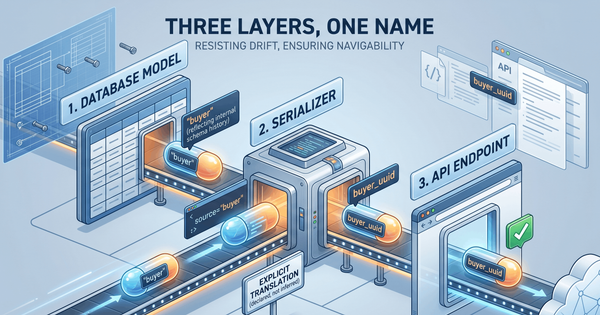

That's why we think about our roadmap differently to a standard SaaS product roadmap. Each feature we ship doesn't just add capability — it creates a new data layer that makes the next feature better. The ops automation running today feeds the inventory intelligence coming next. The inventory intelligence feeds the margin analysis after that. The whole stack gets smarter over time, not just bigger.

The shift in what matters

We're entering a period where the question for any software business is no longer "can you build it?" — it's "do you have the data to make it genuinely better than anything else?"

For Opply, the answer is increasingly yes. We sit at the centre of CPG operations, not as a peripheral tool but as the place where the business actually runs. The data that position generates is proprietary, accumulating, and becoming the foundation of every intelligent feature we build.

Software is a commodity. Data, increasingly, is not. And in a market where code gets easier to write every month, the companies that have spent years building the right data infrastructure are the ones that will be very hard to catch.